Six steps to integrate AI into your quality engineering

In their race to adopt Large Language Models (LLMs) and increase efficiency, many organisations encounter a fundamental paradox: LLMs are, by design, creative and probabilistic. Asking the same question twice often yields two different answers—a “feature” for a poet, but a liability for a business process.

The problem facing modern industry is the “Slot Machine” approach to AI: inputting vague requests and hoping for a usable result. To transform AI from a generic chatbot into a reliable pipeline utility, organisations must move beyond simple “asking” and embrace Prompt Engineering, the discipline of constraining AI so that it delivers consistent, repeatable, and structured outputs.

This post is part of our Basics to Brilliance: A Quality-First Guide to Prompt Engineering whitepaper.

Read on for the six structured steps that take you on that journey, starting with a shift in mindset:

Step 1: Shift your mindset

The journey from basics to brilliance begins with a change in perspective. Stop treating AI like a search engine where keywords suffice. Instead, treat it like a highly capable but very literal junior engineer.

A search engine requires a query; a junior engineer requires a brief.

Brilliance is achieved when you provide unambiguous instructions that ground the AI in your reality rather than the “average” of its training data.

Step 2: Establish the foundations

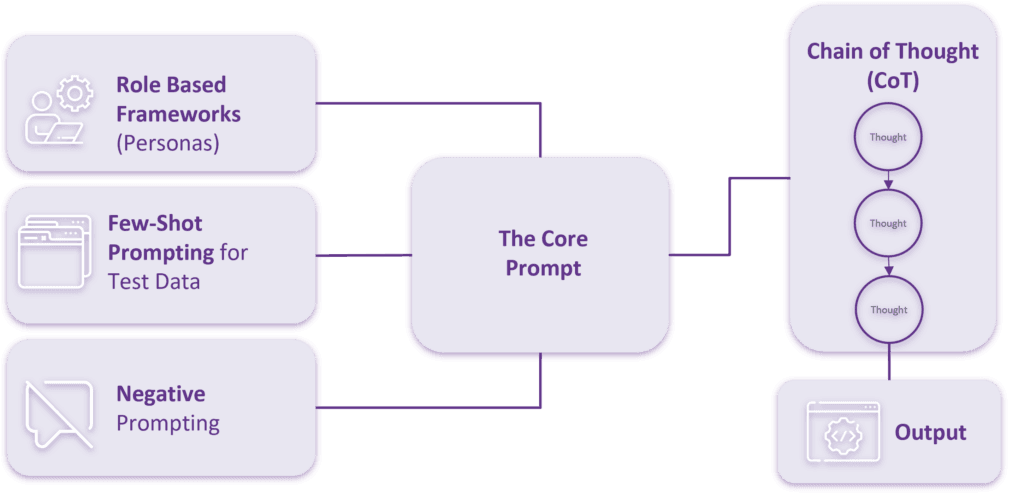

Before building a complex prompt, you must understand the core techniques that guide an AI’s reasoning:

- Role-Based Personas: Steer the AI towards the vocabulary and standards of a specific expert field rather than the “average” of the internet.

- Chain of Thought (CoT): Force the model to follow a step-by-step reasoning process, which is essential for complex tasks.

- Few-Shot Prompting: Teach the AI by providing examples of what “good” looks like, locking in the desired pattern for the output.

- Negative Prompting: Use the “negative space” to define what the AI should not do, creating essential guardrails.
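
The four techniques above can be combined in a single prompt. The following is a minimal sketch, not a prescribed implementation: the function name `build_foundation_prompt`, its parameters, and the sample defect text are all hypothetical and chosen only for illustration.

```python
def build_foundation_prompt(persona, question, examples, avoid):
    """Combine a persona, negative rules, a CoT cue and few-shot examples."""
    lines = [f"You are {persona}."]
    # Negative prompting: state explicitly what is out of bounds.
    for rule in avoid:
        lines.append(f"Do not {rule}.")
    # Chain of Thought: ask for reasoning before the answer.
    lines.append("Think step by step, then give the final answer.")
    # Few-shot: show the pattern the model should imitate.
    for sample_input, sample_output in examples:
        lines.append(f"Input: {sample_input}")
        lines.append(f"Output: {sample_output}")
    # The real question follows the same Input/Output pattern.
    lines.append(f"Input: {question}")
    lines.append("Output:")
    return "\n".join(lines)


prompt = build_foundation_prompt(
    persona="a senior QA automation engineer",
    question="Login button is disabled after a failed password attempt",
    examples=[("Checkout total ignores discount code", "Severity: High")],
    avoid=["suggest code fixes", "add conversational filler"],
)
print(prompt)
```

Ending the prompt on an open `Output:` line nudges the model to continue the pattern set by the examples rather than start a conversation.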

Step 3: Master the anatomy of a professional prompt

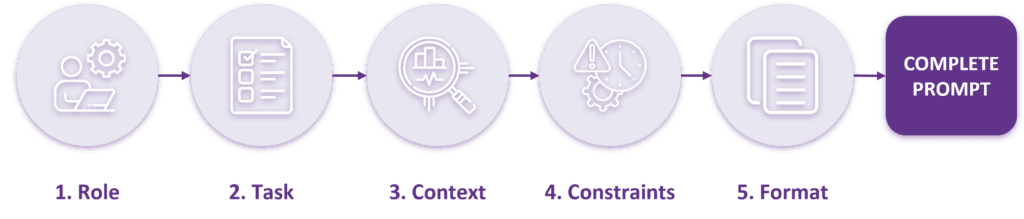

Moving from basic, inconsistent queries to consistently brilliant outcomes requires a fundamental shift in how we construct prompts: away from intuition and towards a modular, structured methodology. Every prompt should follow a five-part anatomy to ensure a “flawless brief”:

- Role (The Expert Persona): Never let the AI act as an average internet user. Assign a professional persona—such as a “Senior QA Automation Engineer with 15 years of experience”—to force the model to pull from high-quality, authoritative training data rather than beginner-level fluff.

- Task (The Action Engine): Replace passive words like “help me” with precise action verbs such as Analyse, Critique, Extract, or Generate.

- Context (Grounding in Reality): AI does not know your proprietary business logic. “Context” is the act of “bringing the car to the garage” by pasting raw materials like Jira tickets, API Swagger docs, or JSON payloads directly into the prompt to prevent hallucinations.

- Constraints (The Negative Space): Define what the AI must not do. Guardrails such as “do not test the backend” or “no conversational filler” ensure the AI stays in its lane and doesn’t waste its output limit on irrelevant information.

- Format (The Last Mile): Dictate the exact structure required—be it Gherkin syntax, a JSON array, or a Markdown table. This eliminates manual reformatting and makes the output immediately ready for your toolchain.
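
One way to make the five-part anatomy repeatable is to model it as a small data structure that always renders the sections in the same order. This is a sketch under assumptions: the class name `PromptBrief`, its field names, and the section headings are illustrative, not part of any library.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBrief:
    """Hypothetical container for the five parts of a prompt."""
    role: str
    task: str
    context: str
    constraints: list[str] = field(default_factory=list)
    output_format: str = "Markdown table"

    def render(self) -> str:
        # Constraints become a bullet list so each guardrail stands alone.
        constraint_lines = "\n".join(f"- {c}" for c in self.constraints)
        sections = [
            ("ROLE", self.role),
            ("TASK", self.task),
            ("CONTEXT", self.context),
            ("CONSTRAINTS", constraint_lines),
            ("FORMAT", self.output_format),
        ]
        return "\n\n".join(f"## {name}\n{body}" for name, body in sections)


brief = PromptBrief(
    role="Senior QA Automation Engineer with 15 years of experience",
    task="Generate negative test scenarios for the password reset flow",
    context="<paste the Jira ticket or API spec here>",
    constraints=["Do not test the backend", "No conversational filler"],
    output_format="Gherkin syntax",
)
print(brief.render())
```

Because every brief passes through the same `render` method, a missing role or format is visible at construction time rather than discovered in the model's output.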

Step 4: Move to scenario-based engineering

“Brilliance” in Prompt Engineering is achieved not through complexity, but through specialisation. It is the transition from constructing one-off, general-purpose prompts to engineering repeatable systems tailored for specific business scenarios. The structural logic of a prompt must dynamically shift based on the specific business value being sought.

| Scenario | Logic Focus | Critical Components | Desired Outcome |
| --- | --- | --- | --- |
| Code Generation | Syntactic Rigour & Edge Cases | Strict Constraints, Environment Context | Production-ready, securely written code that passes unit tests. |
| Data Analysis | Logic-Chain & Chain of Thought | Explicit Step-by-Step Instructions, Ground Truth Context | Insightful, evidence-based conclusions with traceable steps. |
| Executive Reporting | Tone, Clarity & Synthesis | Strict Formatting/Length Constraints, Audience-Specific Context | High-level, action-oriented summaries that distil complexity into simple decisions. |

By meticulously tailoring the structural logic and weighting the five prompt components (Role, Task, Context, Constraints, Format) to the specific scenario, you transform the LLM from a generalist assistant into a highly specialised, domain-aware tool that fits seamlessly into existing workflows.
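
Scenario-specific weighting can be captured as a lookup of reusable profiles. The sketch below assumes three hypothetical profile keys and invented constraint wording; the actual constraints and formats would come from your own teams.

```python
# Hypothetical scenario profiles; the wording is illustrative only.
SCENARIOS = {
    "code_generation": {
        "constraints": ["Cover edge cases", "Use only the libraries named in the context"],
        "output_format": "A single fenced code block",
    },
    "data_analysis": {
        "constraints": ["Show each reasoning step", "Cite the figure behind each claim"],
        "output_format": "Numbered steps followed by a conclusion",
    },
    "executive_reporting": {
        "constraints": ["Maximum 150 words", "No technical jargon"],
        "output_format": "Three bullet points and one recommendation",
    },
}


def prompt_for(scenario: str, task: str, context: str) -> str:
    """Build a prompt whose constraints and format depend on the scenario."""
    profile = SCENARIOS[scenario]  # an unknown scenario raises KeyError on purpose
    constraints = "\n".join(f"- {c}" for c in profile["constraints"])
    return (
        f"TASK: {task}\n"
        f"CONTEXT: {context}\n"
        f"CONSTRAINTS:\n{constraints}\n"
        f"FORMAT: {profile['output_format']}"
    )


print(prompt_for("executive_reporting", "Summarise the release risk", "<test report>"))
```

Keeping the profiles in one place means a change to, say, the reporting length limit is made once and applies to every prompt built for that scenario.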

Step 5: Mitigate risk with active guardrails

The most significant barrier to enterprise-wide AI adoption is the risk profile, dominated by the threat of “hallucinations” and the potential for model misuse. Achieving professional-grade, trustworthy results demands the implementation of active, engineering-grade risk mitigation techniques:

Chain of Thought (CoT) & Zero-Shot CoT: This is a meta-instruction that explicitly asks the AI to “think step-by-step before providing the final answer.” This technique forces the model to articulate its reasoning, process logical steps, and self-correct potential errors before committing to an output. In complex tasks, CoT can reduce factual errors and logical inconsistencies by over 40%.
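
In a pipeline, the reasoning and the final answer usually need to be separated so that downstream tools only consume the answer. A minimal sketch follows, assuming a hypothetical `FINAL ANSWER:` marker that the prompt asks the model to use; the sample response is hand-written, not real model output.

```python
FINAL_MARKER = "FINAL ANSWER:"  # assumed convention, not a model feature


def with_zero_shot_cot(prompt: str) -> str:
    """Append the zero-shot CoT instruction and the answer convention."""
    return (
        f"{prompt}\n\n"
        "Think step by step before providing the final answer. "
        f"Then write the answer on its own line, prefixed with '{FINAL_MARKER}'."
    )


def split_reasoning(response: str) -> tuple[str, str]:
    """Return (reasoning, answer); answer is empty if the marker is missing."""
    reasoning, marker, answer = response.rpartition(FINAL_MARKER)
    if not marker:
        return response.strip(), ""
    return reasoning.strip(), answer.strip()


sample = (
    "Step 1: 3 suites x 40 tests = 120.\n"
    "Step 2: 120 x 2 browsers = 240.\n"
    "FINAL ANSWER: 240"
)
reasoning, answer = split_reasoning(sample)
print(answer)
```

An empty answer signals that the model ignored the convention, which is a useful trigger for a retry or a manual check.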

Context-Driven Memory (RAG/Grounding): Never rely solely on the model’s vast, but potentially outdated or non-proprietary, internal training data. To ensure accuracy and relevance, provide the model with “ground truth” documents, specific company data, or proprietary knowledge to reference. This Retrieval-Augmented Generation (RAG) approach anchors the output to verifiable external facts, which makes unsupported claims both less likely and easier to spot.
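
The grounding idea can be illustrated with a toy retriever. This sketch deliberately swaps real vector search for simple word overlap so it stays self-contained; the function names, the scoring rule, and the sample documents are all assumptions made for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lower-case the text and return its distinct alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Rank document ids by shared words with the question (toy scoring)."""
    question_words = tokenize(question)
    scored = [
        (len(question_words & tokenize(body)), doc_id)
        for doc_id, body in documents.items()
    ]
    ranked = sorted(scored, key=lambda pair: (-pair[0], pair[1]))
    # Documents with no overlap are dropped rather than padded in.
    return [doc_id for score, doc_id in ranked[:top_k] if score > 0]


def grounded_prompt(question: str, documents: dict[str, str]) -> str:
    """Build a prompt that restricts the model to the retrieved sources."""
    sources = retrieve(question, documents)
    excerpts = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in sources)
    return (
        "Answer using only the sources below and cite the source id. "
        "If the sources do not contain the answer, say so.\n\n"
        f"SOURCES:\n{excerpts}\n\nQUESTION: {question}"
    )


docs = {
    "refund-policy": "Refunds are issued within 14 days of a returned order.",
    "release-notes": "Version 2.3 adds single sign-on to the admin portal.",
    "test-strategy": "Regression tests run nightly against the staging environment.",
}
print(grounded_prompt("How many days until refunds are issued?", docs))
```

The instruction to cite a source id gives a reviewer something concrete to check each claim against.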

Continuous Human Oversight (The Force Multiplier): While AI provides unprecedented speed, it is not a replacement for strategic decision-making. Rigorous quality engineering necessitates a human expert to validate and critically assess the model’s outputs, particularly in high-stakes areas like regulatory compliance or financial reporting. At Ten10, we view AI as a “force multiplier” that amplifies human capability, not a substitute for human strategic expertise.
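
Human oversight can be built into the pipeline as an explicit routing step. The sketch below is one possible shape, assuming the model was asked for a JSON object; the tag names, the required keys, and the two route labels are hypothetical.

```python
import json

# Assumed tag names for areas that always need expert sign-off.
HIGH_STAKES_TAGS = {"regulatory-compliance", "financial-reporting"}


def review_route(raw_output: str, tags: set[str], required_keys: set[str]) -> str:
    """Return 'mandatory_review' or 'spot_check' for a model output."""
    try:
        payload = json.loads(raw_output)
    except json.JSONDecodeError:
        return "mandatory_review"  # unparseable output never passes unattended
    if not isinstance(payload, dict) or required_keys - set(payload):
        return "mandatory_review"  # wrong shape or missing fields
    if tags & HIGH_STAKES_TAGS:
        return "mandatory_review"  # high-stakes work always gets an expert
    return "spot_check"  # still sampled by a human, just not blocking


print(review_route('{"summary": "ok", "risk": "low"}', {"ui"}, {"summary", "risk"}))
```

Note that neither route removes the human: the gate only decides whether review blocks the pipeline or happens by sampling.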

Step 6: Embrace agentic automation

The pinnacle of Prompt Engineering, where the discipline realises its full business value, is the shift toward Agentic AI. This transcends simple, chat-based question-and-answer interactions. Agentic workflows define autonomous systems in which sequences of structured prompts drive AI “agents” that can interact with existing internal tools: executing commands in CI/CD pipelines, querying live databases, and initiating further actions.

In this advanced stage, prompt engineering becomes the orchestration layer. The focus shifts from asking for a singular response to defining the comprehensive parameters for an autonomous agent to observe, plan, act, and reflect—allowing it to solve complex, multi-step business problems without constant human intervention.
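
The observe, plan, act, reflect cycle can be sketched as a bounded loop. Everything here is a stand-in: `plan` replaces what would be an LLM call, `run_tests` simulates a flaky test suite instead of calling a real CI/CD system, and the tool registry is hypothetical.

```python
from typing import Callable


def run_tests(state: dict) -> str:
    """Simulated tool: a flaky suite that passes on the second run."""
    state["attempts"] += 1
    return "passed" if state["attempts"] >= 2 else "failed"


# Hypothetical registry; a real agent would wrap pipeline or database calls.
TOOLS: dict[str, Callable[[dict], str]] = {"run_tests": run_tests}


def plan(observation: str) -> str:
    """Stand-in for an LLM call that picks the next action."""
    return "stop" if observation == "passed" else "run_tests"


def run_agent(max_steps: int = 5) -> list[str]:
    state = {"attempts": 0}
    observation = "not started"  # observe: initial view of the world
    log = []
    for _ in range(max_steps):  # hard cap keeps the agent bounded
        action = plan(observation)  # plan: choose the next step
        if action == "stop":
            break
        observation = TOOLS[action](state)  # act: call the chosen tool
        log.append(f"{action} -> {observation}")  # reflect: record the outcome
    return log


print(run_agent())  # ['run_tests -> failed', 'run_tests -> passed']
```

The `max_steps` cap and the action log are the two guardrails worth keeping when the stubs are replaced with real model and tool calls.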